- The camera is just very small; my pinky has nowhere to go

- Some options like Auto-ISO are pointlessly hidden in menu layers

- The low-light performance could be better

- Weather-sealing, anyone?

Admittedly, these are very minor points and I can still comfortably use it, so I’ve been in no hurry to upgrade. Yet when a friend of mine went on vacation, I asked whether I could play with his camera during those three weeks to figure out for myself whether I want something like this. My friend shoots in a very different fashion from how I do, so what works for him might not work for me and vice versa.

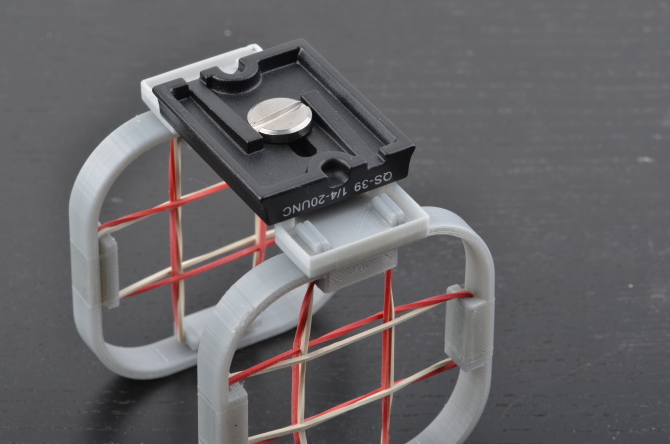

Here it is, a Nikon D800. Added a Tamron 70-200mm f/2.8 for scale and for you to judge how large or tiny my dick is.

Likes

I liked quite a few things:

- It feels great in hand. Yes, it is large, but when switching between this monster and the D5100, the latter feels completely like a toy.

- It’s heavy. Most people I’ve handed it to considered it too heavy, but after putting it on a scale in a discount supermarket (2600 g including the 70-200), I consider it a feature because it balances nicely. But you do need a decent strap.

- The viewfinder is fantastic. As in: amazingly good. I can see things in the viewfinder. It’s much larger and brighter and a lot more comfortable. Hard to say whether the feel in hand or the large viewfinder is my favorite feature.

- Despite being an early model on which the auto-focus is said to be wonky, it worked fine for me. The 51 AF points were nice, though very much in the center of the frame. The 3D tracking, once I figured out how it works, was quite impressive. Even with third-party lenses, the focusing was surprisingly quick.

- It has more options than you can shake a stick at. Pretty much everything is customizable. There are so many customizable buttons that I didn’t end up using them, since there was already a button for everything and the kitchen sink.

- In particular, the Auto/Manual ISO function can be switched easily using the buttons and wheels, unlike on the D5100, which hides said option deep inside its settings.

- It has weather sealing and is built to last. I don’t tend to baby my electronics so some extra robustness is very much welcome.

- The pop-up flash feels nice and solid. I never used it, but its mechanics give it a much more high-end feel than the cheap pop-up on my small DSLR.

Nolikies

Not everything is gold and rainbows with the D800.

- The camera shoots slowly. 4 frames per second is the same speed as with my entry-level D5100. Of course it shoots with 36 megapixels, not 16, but I would rather have fewer megapixels and a faster frame rate. Canon’s 70D shoots 7 fps, which is roughly the speed I would like to have. No need for immense frame rates like the 7D, D500, 1DX or D5. But 4 is a tad low.

- The files are massive. 15 MB JPEG plus some 45 MB RAW. This also takes forever to write to the card. I could switch to lower res, but that would probably lead to regrets of taking the perfect picture but in potato quality. Also, it wouldn’t make the camera any faster.

- CompactFlash slot. I’d rather have a fast SD slot or an XQD slot. The CF/SD mix is impractical, expensive or obsolete while not being massively fast.

- The white balance is wonky. Maybe that’s the fault of my Tamron lens, but sometimes the JPEGs came out in a weird way, mostly too reddish. Attempting to switch to different modes did not help much. Can be fixed in post, but still annoying.

- The multi-direction button on the back is in a bad spot because my nose keeps pressing the left direction pad, making the AF point wander to the left. Took me a few days to understand why the point was moving to the left all the time. I even considered that the AF might be broken. But no, it was my nose. Alternatively, it’s a problem with my nose and I should get another one.

- The “pro” button layout is not as good as expected: it has four options (ISO, bracketing, white balance and quality), two of which are rarely used (bracketing and quality). The third (white balance) should not need much use either. On the D5100 I almost never need to touch it, so I don’t know why the D800 is such a massive step back.

- Since the four-button thing occupies the spot where the mode wheel is on “consumer” bodies, the mode is set using a switch on the other side, which is in an awful position and hard to reach.

- The shutter sounds like mashing the lid of a dumpster, it’s that loud. I suppose I can’t use it at night since the neighbors might call the police.

- No infrared receiver? Come on Nikon, you cheaped out on a 50ct part? Even my D5100 has two (2!) of them, very useful for triggering it on a tripod. The remotes are cheap and reliable (heck, even my phone has infrared), so I have zero understanding why I would need to add an ugly external one.

- There is no distinction between AF points in portrait and landscape orientation. Saw this ingenious feature on the Canon 70D and loved it right away.

Conclusions

I wrote down many more complaints than praise, which probably does not do the camera justice, but for a device that would cost me roughly 1000€ (used, at the time of writing), I want to get it right. And the D800 is by no means a bad camera, but after three weeks of testing I am confident in saying that it’s not going to be my next camera. Generally, I very much enjoyed having the camera over for a longer time, as it allowed me to get to know it much better and test it on various occasions. I consider the D800 a great camera for studio work (which incidentally is exactly how it is used in its day job), where a high resolution is desired and the light is well controlled.

What are my options? For now I will be staying with the D5100, since apart from the few complaints above it still delivers awesome images and in certain regards tops the D800. But the nagging voice still remains, so the next camera I’d love to test is the D750, which seems to be a perfect match spec-wise.
